Project Description

Motivation

Deep learning, particularly large language models, has attracted significant attention in NLP, but lexical-semantic resources (LSRs) remain crucial for tasks such as machine translation and word sense disambiguation. Many LSRs, however, including wordnets, are heavily biased toward English because they rely on translating the Princeton WordNet. This bias produces incomplete or inaccurate resources for non-English languages and underrepresents culture-specific concepts that have no direct English equivalent. Consequently, NLP systems struggle to capture linguistic diversity and the richness of human language.

Lexical untranslatability, or cross-lingual lexical gaps, poses a significant challenge in NLP, as some words have no direct equivalent in other languages. For example, the Arabic word خالة (“mother’s sister”) has no English counterpart, while “sibling” has no precise equivalent in Arabic or in other languages such as Italian. These gaps affect machine translation and the quality of lexical resources. Google Translate, for instance, renders “his cousin gave birth to a twin” in Arabic as أنجب ابن عمه توأما, which wrongly implies that a male gave birth: lacking a gender-neutral Arabic equivalent of “cousin”, the system chose ابن عمه (“son of his paternal uncle”). The issue is more pronounced for low-resource languages, which are often underrepresented in multilingual lexical resources, further deepening linguistic disparities.

The Problem

The development of diversity-aware datasets for multilingual lexical resources in NLP faces persistent challenges. Despite the critical need to address cross-lingual lexical gaps, there remains no systematic method for identifying these gaps, resulting in a scarcity of large-scale resources for lexical untranslatability. While progress has been achieved in specific domains such as kinship and color terminology, most fields remain underexplored, particularly for low-resource languages. These languages often lack comprehensive lexical databases, and automated approaches fail to capture their complexity, further exacerbating the digital divide in NLP applications. Moreover, most efforts are unidirectional, focusing predominantly on translations from English to other languages. This approach not only limits cross-linguistic understanding but also perpetuates English-centric biases, prioritizing English frameworks and neglecting the unique linguistic and cultural concepts of non-English languages.

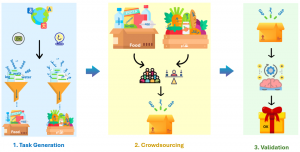

Figure 1

The Solution

This project introduces a systematic approach to creating diversity-aware datasets through a novel crowdsourcing method: a bottom-up approach that engages native speakers from the general public. Crowd workers compare lexemes between two languages, focusing on domains rich in lexical diversity, such as kinship and food. The LingoGap crowdsourcing website supports these comparisons through microtasks that identify equivalent terms, language-specific terms, and lexical gaps across languages.

This methodology enables bidirectional exploration of lexical diversity between any two languages, from the source language (SL) to the target language (TL) and vice versa. Unlike traditional methods, it does not rely on English as an intermediate (pivot) language, thereby avoiding biases that can distort linguistic diversity. By capturing lexical gaps and equivalent terms across languages, including low-resource ones, this scalable method offers a viable alternative to conventional approaches. It produces high-quality, diversity-rich data and can be applied to any language pair for which bilingual native speakers are available.
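To make the pivot-language problem concrete, the sketch below uses a hypothetical two-entry Arabic-to-English mini-lexicon (the Arabic words and their glosses are real; the lexicon itself is illustrative, not LingoGap data, and the helper names are invented). Because both خالة (mother's sister) and عمة (father's sister) map to English “aunt”, a resource built by pivoting through English can no longer tell them apart.

```python
# Hypothetical Arabic -> English pivot lexicon (illustrative only, not LingoGap data).
ARABIC_TO_ENGLISH = {
    "خالة": "aunt",  # mother's sister
    "عمة": "aunt",   # father's sister
}


def round_trip(word, lexicon):
    """Arabic candidates recovered after translating `word` into the pivot and back."""
    pivot = lexicon[word]
    return sorted(src for src, tgt in lexicon.items() if tgt == pivot)


def collapsed_distinctions(lexicon):
    """Group source words that become indistinguishable once mapped to the pivot."""
    groups = {}
    for src, pivot in lexicon.items():
        groups.setdefault(pivot, []).append(src)
    return {pivot: sorted(srcs) for pivot, srcs in groups.items() if len(srcs) > 1}


print(round_trip("خالة", ARABIC_TO_ENGLISH))  # both aunts come back: the distinction is lost
print(collapsed_distinctions(ARABIC_TO_ENGLISH))
```

Comparing Arabic entries directly against the other language, as the bidirectional method does, keeps the two concepts separate instead of merging them through “aunt”.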

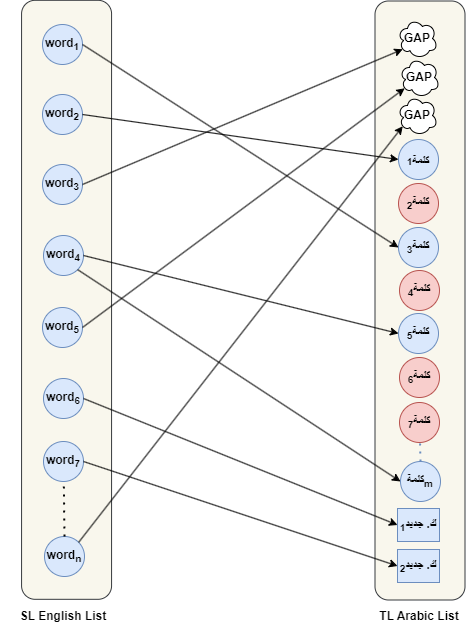

Figure 2

The inputs to our crowdsourcing method are an ordered source–target language pair and a semantic field (SF). Figure 2 gives an overview of the method, which consists of three main steps:

- Task generation: A semi-automated process that generates two lists of lexical entries, one for each language, where each entry is a tuple (word, gloss), i.e., a term and its definition.

- Crowdsourcing: This step uses the LingoGap crowdsourcing website to compare lexical entries from the SL with the corresponding TL entries. Native speakers assess these entries to determine translation equivalence or to identify lexical gaps. LingoGap involves two roles: the task requester and the crowd worker. The task requester (1) constructs the input datasets for both the SL and TL within the SF, (2) designs and creates the crowdsourcing tasks, (3) oversees task execution to ensure the quality of contributions, and (4) validates and exports the final crowdsourced data. The crowd worker completes these tasks by identifying equivalent terms and lexical gaps in the TL.

- Validation: Lexical entries and gaps are evaluated automatically, followed by expert-based verification.
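The inputs and the task-generation step can be pictured as plain data structures. This is a minimal sketch, not LingoGap's internal data model: the class names, the `Judgment` labels, the `Answer` shape, and the batch size of 10 SL entries per microtask are all assumptions made for illustration. Each lexical entry is a (word, gloss) pair, as described above.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class LexicalEntry:
    word: str   # the term
    gloss: str  # its definition


class Judgment(Enum):
    EQUIVALENT = "equivalent"  # a TL entry expresses the same meaning
    LEXICAL_GAP = "gap"        # no TL word expresses the SL meaning


@dataclass
class Answer:
    """What one crowd worker returns for one SL entry (hypothetical shape)."""
    source: LexicalEntry
    judgment: Judgment
    target: Optional[LexicalEntry] = None  # set only for EQUIVALENT


@dataclass
class Microtask:
    language_pair: Tuple[str, str]  # ordered (SL, TL)
    semantic_field: str
    source_entries: List[LexicalEntry]
    target_entries: List[LexicalEntry]


def generate_tasks(sl, tl, field, source, target, batch_size=10):
    """Split the SL list into batches; each batch is compared against the full TL list."""
    return [
        Microtask((sl, tl), field, source[i:i + batch_size], target)
        for i in range(0, len(source), batch_size)
    ]
```

Because the language pair is ordered, calling `generate_tasks("ar", "en", "food", arabic_entries, english_entries)` with the lists swapped yields the tasks for the reverse direction.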

LingoGap Tool

Researchers can access the LingoGap website using the following links:

- Task Creation: Researchers (requesters) can create crowdsourcing tasks using this link: http://lingogap.disi.unitn.it/admin.jsp.

- Task Participation: Crowd workers can complete crowdsourcing tasks via this link: http://lingogap.disi.unitn.it/.

How It Works

Step-by-step guidelines for using LingoGap are available at: https://github.com/HadiPTUK/lingogap_instruction

Note: For assistance with importing source and target lists, uploading guidelines, or exporting the resulting data, please contact Hadi Khalilia, the project manager, via email at: hadi.khalilia@unitn.it.

Case Studies

The case studies fall into two categories:

- Bilingual Experiments: We conducted these experiments on five language pairs across two semantic domains: kinship and food.

- Monolingual Experiments: These experiments explore linguistic diversity by comparing language-independent concepts in a domain such as kinship with the words of a single language in that domain.

Bilingual Experiments

Case Study 1: English vs. Arabic (Food)

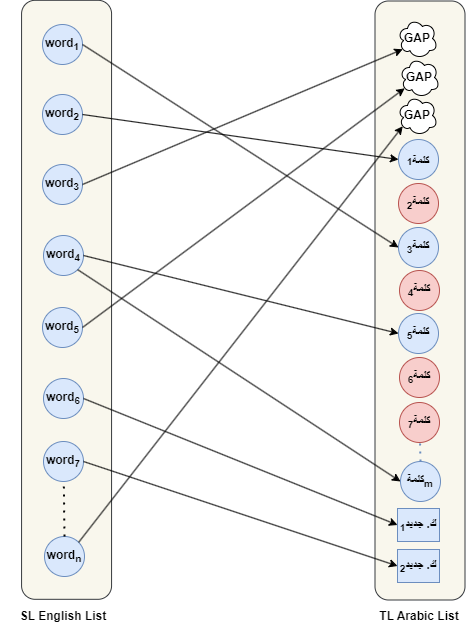

This study focused on the food domain and involved two experiments, each representing a different direction. The first experiment involved crowdsourcing lexical diversity from English to Arabic, identifying gaps and equivalent words in Arabic. The second experiment explored the reverse direction, from Arabic to English, identifying lexical gaps and equivalent terms in English.

Figure 3: Crowdsourcing experiments between SL and TL in two directions
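How the two directions might be combined is sketched below under a simplifying assumption: each direction has already been reduced to one answer per entry, with `None` marking a lexical gap. The function name, the dictionary format, and the toy English and Arabic food judgments are hypothetical and are not results reported by the study.

```python
def merge_directions(forward, backward):
    """Combine SL->TL and TL->SL judgments.

    forward:  {sl_word: tl_word, or None if the TL has a lexical gap}
    backward: {tl_word: sl_word, or None if the SL has a lexical gap}
    """
    # Pairs that each direction independently judged to be equivalent.
    mutual = sorted(
        (sl, tl) for sl, tl in forward.items()
        if tl is not None and backward.get(tl) == sl
    )
    gaps_in_tl = sorted(sl for sl, tl in forward.items() if tl is None)
    gaps_in_sl = sorted(tl for tl, sl in backward.items() if sl is None)
    return {"mutual_equivalents": mutual, "gaps_in_tl": gaps_in_tl, "gaps_in_sl": gaps_in_sl}


# Hypothetical English <-> Arabic food judgments, for illustration only.
forward = {"bread": "خبز", "brunch": None}
backward = {"خبز": "bread", "كنافة": None}
print(merge_directions(forward, backward))
```

Keeping the two gap lists separate reflects the point of the bidirectional design: gaps found in the TL and gaps found in the SL are different findings, and neither direction alone reveals both.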

Case Study 2: Indonesian vs. Banjarese (Food)

This study focused on the food domain and involved two experiments, each representing a different direction. The first experiment involved crowdsourcing lexical diversity from Indonesian to Banjarese, identifying gaps and equivalent words in Banjarese. The second experiment explored the reverse direction, from Banjarese to Indonesian, identifying lexical gaps and equivalent terms in Indonesian.

Case Study 3: English vs. Persian (Kinship)

This study focused on the kinship domain and involved two experiments, each representing a different direction. The first experiment involved crowdsourcing lexical diversity from English to Persian, identifying gaps and equivalent words in Persian. The second experiment explored the reverse direction, from Persian to English, identifying lexical gaps and equivalent terms in English.

Case Study 4: Arabic vs. Persian (Kinship)

This study focused on the kinship domain and involved two experiments, each representing a different direction. The first experiment involved crowdsourcing lexical diversity from Arabic to Persian, identifying gaps and equivalent words in Persian. The second experiment explored the reverse direction, from Persian to Arabic, identifying lexical gaps and equivalent terms in Arabic.

Case Study 5: English vs. Turkish (Kinship)

This study focused on the kinship domain and involved two experiments, each representing a different direction. The first experiment involved crowdsourcing lexical diversity from English to Turkish, identifying gaps and equivalent words in Turkish. The second experiment explored the reverse direction, from Turkish to English, identifying lexical gaps and equivalent terms in English.

Monolingual Experiments

Case Study 1: Kinship concepts vs. Turkish

This monolingual experiment explores lexicalisations and lexical gaps by comparing language-independent kinship concepts with Turkish kinship terms. First, the kinship concepts were formalized using the concept hierarchy of the Universal Knowledge Core (UKC), which covers six subdomains: grandparents, grandchildren, siblings, uncles and aunts, nephews and nieces, and cousins. Second, 197 kinship concepts defined in English were collected from the UKC with the Exporter tool, a built-in UKC management service for data extraction. The Turkish dataset, which contains 38 terms, was extracted from KeNet (the Turkish WordNet) with a Python script that applies semantic filtering.
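The Python script used on KeNet is not described here, so the following is only one plausible form of semantic filtering, assuming the wordnet is available as a hyponym map plus a synset-to-lemma table: collect every synset below a kinship root by breadth-first traversal and return its lemmas. The synset IDs, the function names, and the miniature graph are hypothetical; the Turkish words are real kinship terms (teyze, hala, amca, dayı) plus one non-kinship control (ekmek, “bread”).

```python
from collections import deque


def descendants(root, hyponyms):
    """Breadth-first traversal collecting every synset below `root`."""
    seen, queue = set(), deque([root])
    while queue:
        node = queue.popleft()
        for child in hyponyms.get(node, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


def filter_kinship_terms(root, hyponyms, lemmas):
    """Return the sorted lemmas of all synsets under the kinship root."""
    terms = set()
    for synset in descendants(root, hyponyms):
        terms.update(lemmas.get(synset, []))
    return sorted(terms)


# Hypothetical miniature wordnet (synset IDs invented for illustration).
HYPONYMS = {
    "syn:relative": ["syn:aunt_maternal", "syn:aunt_paternal", "syn:uncle"],
    "syn:uncle": ["syn:uncle_paternal", "syn:uncle_maternal"],
    "syn:food": ["syn:bread"],
}
LEMMAS = {
    "syn:aunt_maternal": ["teyze"],
    "syn:aunt_paternal": ["hala"],
    "syn:uncle_paternal": ["amca"],
    "syn:uncle_maternal": ["dayı"],
    "syn:bread": ["ekmek"],
}

print(filter_kinship_terms("syn:relative", HYPONYMS, LEMMAS))
```

Filtering by position in the hierarchy, rather than by keyword matching on glosses, picks up kinship terms whose definitions never use a word like “relative”.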

Four native Turkish speakers, proficient in English and chosen through strict quality-based selection criteria, participated as volunteers. They completed seven crowdsourcing tasks: one per subdomain, except for “cousins”, which was split into two tasks because it contains 56 concepts. Overall, the experiment identified 164 lexical gaps, 33 equivalent words, and four new kinship concepts in Turkish, with an average Alpha score of 0.88.
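The reported Alpha scores measure agreement among crowd workers. Assuming they are Krippendorff's Alpha for nominal labels (the page does not name the variant), the sketch below shows the standard computation from a coincidence matrix, alpha = 1 - (n - 1) * sum over c != k of o_ck / sum over c != k of n_c * n_k. How the project handled missing answers or averaged across tasks is not described here, and the function name and toy labels are hypothetical.

```python
from collections import Counter
from itertools import permutations


def krippendorff_alpha_nominal(units):
    """Krippendorff's Alpha for nominal data.

    `units` is a list of items; each item is the list of labels that the
    workers gave it (missing answers are simply left out).
    """
    # Coincidence matrix: every ordered pair of labels from different workers
    # on the same item contributes 1 / (m_u - 1).
    o = Counter()
    for labels in units:
        m = len(labels)
        if m < 2:
            continue  # items with fewer than two labels are not pairable
        for c, k in permutations(labels, 2):
            o[(c, k)] += 1 / (m - 1)

    # Marginal totals n_c and the number of pairable values n.
    n_c = Counter()
    for (c, _k), weight in o.items():
        n_c[c] += weight
    n = sum(n_c.values())

    observed = sum(w for (c, k), w in o.items() if c != k)
    expected = sum(n_c[c] * n_c[k] for c in n_c for k in n_c if c != k)
    if expected == 0:
        raise ValueError("Alpha is undefined when all labels fall in one category")
    return 1 - (n - 1) * observed / expected


# Toy labels from two workers on four concepts (not the study's data).
toy = [["gap", "gap"], ["gap", "gap"], ["equivalent", "equivalent"], ["gap", "equivalent"]]
print(round(krippendorff_alpha_nominal(toy), 3))  # 0.533
```

A single disagreement out of four items already pulls Alpha down to about 0.53 here, which puts the reported averages of 0.85 to 0.90 in perspective.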

Case Study 2: Kinship concepts vs. Persian

This monolingual experiment explores lexicalisations and lexical gaps by comparing language-independent kinship concepts with Persian kinship terms. First, the kinship concepts were formalized using the UKC’s concept hierarchy, which covers six subdomains: grandparents, grandchildren, siblings, uncles and aunts, nephews and nieces, and cousins. Second, 197 kinship concepts defined in English were collected from the UKC with the Exporter tool, a built-in UKC management service for data extraction. The Persian dataset, which contains 38 terms, was extracted from FarsNet (the Persian WordNet) with a Python script that applies semantic filtering.

Three native Persian speakers, proficient in English and chosen through strict quality-based selection criteria, participated as volunteers. They completed seven crowdsourcing tasks: one per subdomain, except for “cousins”, which was split into two tasks because it contains 56 concepts. Overall, the experiment identified 163 lexical gaps and 34 equivalent words in Persian, with an average Alpha score of 0.85.

Case Study 3: Kinship concepts vs. Maba

This monolingual experiment explores lexicalisations and lexical gaps by comparing language-independent kinship concepts with Maba kinship terms. First, the kinship concepts were formalized using the UKC’s concept hierarchy, which covers six subdomains: grandparents, grandchildren, siblings, uncles and aunts, nephews and nieces, and cousins. Second, 197 kinship concepts defined in English were collected from the UKC with the Exporter tool, a built-in UKC management service for data extraction. The Maba dataset, which comprises 20 kinship terms, was gathered through fieldwork, directly from native Maba speakers.

Three native Maba speakers, proficient in English and chosen through strict quality-based selection criteria, participated as volunteers. They completed seven crowdsourcing tasks: one per subdomain, except for “cousins”, which was split into two tasks because it contains 56 concepts. Overall, the experiment identified 177 lexical gaps and 20 equivalent words in Maba, with an average Alpha score of 0.90.
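The counts reported for the three monolingual experiments can be checked with simple arithmetic: in each language, lexical gaps plus equivalent words add up to the 197 UKC concepts, consistent with every concept receiving exactly one of the two outcomes (the four new Turkish concepts lie outside this set). The short script below, whose names are illustrative, verifies this and computes the share of concepts that each language lexicalizes.

```python
TOTAL_CONCEPTS = 197  # kinship concepts exported from the UKC

# Figures as reported in the three monolingual case studies.
RESULTS = {
    "Turkish": {"gaps": 164, "equivalents": 33},
    "Persian": {"gaps": 163, "equivalents": 34},
    "Maba": {"gaps": 177, "equivalents": 20},
}


def lexicalization_rates(results, total):
    """Check that gaps + equivalents cover all concepts; return % lexicalized per language."""
    rates = []
    for language, r in results.items():
        covered = r["gaps"] + r["equivalents"]
        if covered != total:
            raise ValueError(f"{language}: {covered} concepts classified, expected {total}")
        rates.append((language, round(100 * r["equivalents"] / total, 1)))
    return rates


for language, pct in lexicalization_rates(RESULTS, TOTAL_CONCEPTS):
    print(f"{language}: {pct}% of the {TOTAL_CONCEPTS} concepts are lexicalized")
```

This gives roughly 16.8% for Turkish, 17.3% for Persian, and 10.2% for Maba, so in every language studied more than four out of five UKC kinship concepts correspond to a lexical gap.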

Acknowledgements

We sincerely thank Prof. Rusma Noortyani and Shandy Darma for coordinating the Indonesian-Banjarese experiments, Kubra Korkmaz for coordinating the Turkish experiments, and Arya Torabi for overseeing the Persian experiments. Their dedication and efforts were essential to the success of this project.

Publications and Research

Key Paper:

Hadi Khalilia, Jahna Otterbacher, et al. Crowdsourcing Lexical Diversity. 2024. arXiv: 2410.23133 [cs.CL]. URL: https://arxiv.org/abs/2410.23133.

Project Team

Hadi Mahmoud Yousef Khalilia

Ph.D. Student

University of Trento

Jahna Otterbacher

Associate Professor

Open University of Cyprus

Gabor Bella

Associate Professor

IMT Atlantique